Introduction

This book focuses on the application of generative AI to software engineering. While some of the ideas I'm going to discuss are specific to my field, others apply to other applications of generative AI as well. The core principles of accountability, traceability, and governance are relevant whenever we use probabilistic tools to produce critical outputs.

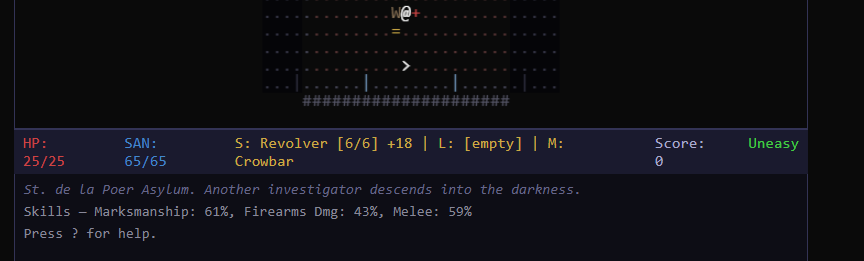

Creating or modifying computer software has never been this quick, easy or fun. So I've added a side story to this book about a long-postponed hobby project, a roguelike game clone. I built it entirely with generative AI and freely available tools. Just a couple of years ago I wouldn't even have started because of the effort and time required. This is not meant as a wow factor or a "look how many lines of code you can produce in a week" demo, just a small showcase of how much you can do in a short time with the tools available today.

You'll recognize my take-home lessons from this little project by the following formatting:

I'd always just wanted to add ranged attacks with aiming to Nethack, but never had the time for that. But rot.js, Opencode and also this little book project gave me some motivation.

Let's dig a bit deeper.

Free code

As the price of code goes down, the expensive parts of software delivery become more visible. Right?

Think of coordinating multiple developers working on the same system. Or ensuring that generated code actually solves the right problem, without inventing problems that do not exist or introducing new ones. And doing all this while maintaining quality standards when the volume of things to verify goes through the roof.

A rigorous study by METR (2025) found that experienced open-source developers using AI tools actually took 19% longer to complete tasks. What was noteworthy was that they had predicted a 24% speedup beforehand, and still believed AI had helped afterward despite the opposite being true. The gap between perception and reality is significant, and Chapter 3 examines this and other evidence in detail.

Google's DORA reports tell a nuanced story at organizational scale: the 2024 data showed AI adoption correlating with lower throughput, while the 2025 sequel found that the penalty had flipped, though delivery instability still goes up.

Over the past year we have gained much better capabilities and emerging disciplines that let us raise our abstraction level from the code to the requirement. I discuss this transition, often referred to (much to my dismay) as Specification-Driven Development (SDD), later in this book. It's essentially about shifting our attention from code to requirements, planning and verification.

The gap between perception and reality matters. Organizations are making strategic decisions about AI adoption based on impressions that may not match the facts on the ground, which can lead to disappointment and disillusionment when the expected productivity gains do not materialize. One of the goals of this book is to help you avoid that trap.

In the software bubble, the situation on the ground still resembles the aftermath of a first hot contact with the enemy. Many are restructuring workflows around tools that may be creating coordination overhead faster than they reduce implementation time. I might very well be one of them. Engineers are developing habits, like deferring to AI suggestions and skimming generated code, that may degrade quality over time, and they certainly don't have the same grip on the code as they had in the old world.

The bottom line here is that we're now at a point where individual productivity gains do not automatically translate to team productivity gains, unless we do something about it or wait for somebody to create that magical software factory. You know, the one that will just churn out (basically brute-force) your app/solution/whatever with little to no involvement from you.

The evidence for AI coding productivity is conflicting. My take is that the initial speed and the sheer volume of output look good at first, but the cleanup and troubleshooting at the end tend to take as much time as the traditional debug-fix-test cycle, or more.

The evidence gap

Beyond the METR study cited above, the evidence for AI coding productivity is mixed and methodologically weak. Many studies measure tasks that are not representative of real software development work, use toy problems, short time horizons, or metrics like lines of code that do not correlate with delivered value. Perhaps unsurprisingly, studies sponsored by AI vendors tend to show larger benefits than independent research.

This does not mean AI tools are worthless or cannot be astonishingly useful. Many of them (and I've used quite a few since the early days) are impressive, and many developers find them genuinely helpful. But it does mean we should be skeptical of claims about 10x productivity or revolutionary transformation. The evidence does not support such claims, and organizations that bet heavily on them may be disappointed.

The probabilistic challenge

Large Language Models (LLMs) are probabilistic systems. They generate outputs based on statistical patterns in their training data, not on logical deduction or formal verification. This means they can produce excellent code, terrible code, or subtly wrong code that looks excellent on first inspection. The same prompt with exactly the same sources might yield totally different results on the next run.
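To make the run-to-run variation concrete, here is a toy sketch in Python. It is not how a real LLM works internally: the three candidate completions and their probabilities are made up for illustration, and `sample_completion` and `run_many` are hypothetical names. All it shows is that sampling from a fixed distribution can return a different answer each time, even though the "prompt" never changes.

```python
import random

# Made-up probabilities for three completions of one fixed prompt.
# These numbers are illustrative assumptions, not measurements.
COMPLETION_PROBS = {
    "return a + b": 0.70,            # correct
    "return a - b": 0.20,            # subtly wrong
    "return str(a) + str(b)": 0.10,  # wrong, but plausible at a glance
}


def sample_completion(rng: random.Random) -> str:
    """Pick one completion at random, weighted by its probability."""
    completions = list(COMPLETION_PROBS)
    weights = list(COMPLETION_PROBS.values())
    return rng.choices(completions, weights=weights, k=1)[0]


def run_many(n: int, seed: int) -> dict:
    """Ask the same 'prompt' n times and count how often each answer appears."""
    rng = random.Random(seed)
    counts = {completion: 0 for completion in COMPLETION_PROBS}
    for _ in range(n):
        counts[sample_completion(rng)] += 1
    return counts


if __name__ == "__main__":
    for completion, count in run_many(1000, seed=1).items():
        print(f"{count:>4} x {completion}")
```

The correct answer dominates, but the wrong ones keep showing up. That is the property the rest of this book tries to manage rather than wish away.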

Software engineering, by contrast, needs determinism. A given input should produce the same output. Edge cases should be handled consistently. Behavior should be predictable and verifiable. A good rule of thumb is that any deduction, sequence or data processing you can easily do the old way should be done that way. Use GenAI to help develop that solution, not to do the processing itself.

This conflict is fundamental, not incidental. The probabilistic nature of LLMs is not a bug to be fixed, and we won't get entirely deterministic LLMs. What we can do is design processes that manage the probabilistic output in ways that produce reliable outcomes. This is a major theme of this book.

Chapter 5 explores this challenge in depth: the step size principle, probability management through specification, and why smaller, bounded tasks produce more reliable results than open-ended generation. The core insight is that we are not trying to eliminate probabilistic variation—we are trying to constrain it to spaces where variation produces acceptable outcomes.
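A back-of-the-envelope way to see why step size matters is to multiply per-step success rates. The sketch below is my simplification, not a formula from the research: it assumes every step succeeds independently with the same probability, and the 95% figure is invented for illustration. `chain_success` is a hypothetical helper name.

```python
def chain_success(p_step: float, steps: int) -> float:
    """Probability that every step in a chain succeeds.

    Assumes the steps are independent and equally reliable,
    which real pipelines rarely are.
    """
    if not 0.0 <= p_step <= 1.0:
        raise ValueError("p_step must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must not be negative")
    return p_step ** steps


if __name__ == "__main__":
    for steps in (1, 5, 10, 20):
        print(f"{steps:>2} unchecked steps at 95% each: {chain_success(0.95, steps):.1%}")
```

Under these assumptions, ten unchecked steps in a row succeed only about 60% of the time, and twenty steps about 36%. Verifying after each small step resets the chain, which is one way to read the case for bounded tasks.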

The response

What I'm going to present in this book is a practical, learnable approach to AI-assisted software delivery. I try to address issues like coordination, correctness, and accountability. The principles of my 'framework' (note the quotes, this is not TOGAF, Scrum or SAFe 3.0) rest on three simple mechanisms that tame the probabilistic behavior and create a reliable delivery process, or set the foundation for a 'software factory'.

| Mechanism | What It Does |
|---|---|
| Gates | Controls which agent is allowed to work next, and on what. Enforced transitions between stages prevent wandering and keep things focused and traceable. |
| Tracking artifacts | Record what was planned, built, tested, and approved. Machine-readable specifications tied to specific backlog items, providing the single source of truth. |
| Human checkpoints | Hard stops in the state machine. Validate understanding before code generation, validate implementation before delivery, and retain authority over what enters production. |

Together, these mechanisms create accountability without sacrificing the speed that makes AI tools valuable. Let the AI work freely inside the gates, and step in yourself for the decisions that matter. The result is something you can trace, review, and actually explain to someone, even when most of the code is generated by AI.
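The three mechanisms in the table can be sketched as a tiny state machine. This is a minimal illustration under my own assumptions, not the pipeline described later in the book: the stage names, the `WorkItem` class, the `advance` method and the backlog id `BL-42` are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Optional


class Stage(Enum):
    SPEC = "spec"
    SPEC_REVIEW = "spec_review"        # human checkpoint
    IMPLEMENT = "implement"
    TEST = "test"
    RELEASE_REVIEW = "release_review"  # human checkpoint
    DONE = "done"


# Gate: each stage has exactly one permitted next stage.
NEXT_STAGE: Dict[Stage, Stage] = {
    Stage.SPEC: Stage.SPEC_REVIEW,
    Stage.SPEC_REVIEW: Stage.IMPLEMENT,
    Stage.IMPLEMENT: Stage.TEST,
    Stage.TEST: Stage.RELEASE_REVIEW,
    Stage.RELEASE_REVIEW: Stage.DONE,
}

# Human checkpoints: stages that cannot be left without a named approver.
HUMAN_CHECKPOINTS = {Stage.SPEC_REVIEW, Stage.RELEASE_REVIEW}


@dataclass
class WorkItem:
    backlog_id: str
    stage: Stage = Stage.SPEC
    # Tracking artifact: an append-only record of every transition.
    history: List[dict] = field(default_factory=list)

    def advance(self, actor: str, approved_by: Optional[str] = None) -> Stage:
        """Move to the next stage if the gate and checkpoint rules allow it."""
        if self.stage is Stage.DONE:
            raise ValueError(f"{self.backlog_id} is already done")
        if self.stage in HUMAN_CHECKPOINTS and approved_by is None:
            raise PermissionError(f"{self.stage.value} needs a human approval")
        next_stage = NEXT_STAGE[self.stage]
        self.history.append({
            "from": self.stage.value,
            "to": next_stage.value,
            "actor": actor,
            "approved_by": approved_by,
        })
        self.stage = next_stage
        return next_stage


if __name__ == "__main__":
    item = WorkItem("BL-42")
    item.advance(actor="spec-agent")        # spec -> spec_review
    try:
        item.advance(actor="coding-agent")  # blocked: no human approval yet
    except PermissionError as err:
        print("blocked:", err)
    item.advance(actor="coding-agent", approved_by="alice")
    print(item.stage, len(item.history))
```

The point of the sketch is the shape, not the details: agents can only move an item along the permitted path, every move leaves a record tied to a backlog item, and two of the stages refuse to move without a person signing off.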

How this book is organized

Part I lays out the problem space: the cognitive overload facing teams, what the research actually shows, the field of "software factory" attempts, and the compound probability problem that makes multi-agent pipelines unreliable without structure.

Part II addresses the human side: how developer roles change when AI writes the code, what new skills matter, and why organizations resist new ways of working.

Part III presents the governed delivery approach: accountability as the goal, the pipeline mechanics, architecture as probability management, SDD, and where you need to stay involved.

Part IV is the practitioner's field guide: open problems, getting started, context engineering, tool selection, legacy modernization, and closing the feedback loop.

The book closes with a look at where software engineering is heading and what this moment means for our profession.

This book has been designed, perhaps optimistically, to be read from beginning to end, but feel free to jump around. The core principles are introduced early and then applied throughout, so you can pick the sections most relevant to your current challenges. The field guide in Part IV is meant to be a practical reference for implementation details.

Your reading companion: AI Bullshit Bingo

Before we begin, here's a little interactive companion for your next AI strategy meeting, keynote, or LinkedIn doom-scroll. You might also find it entertaining while reading this book. TIP: If you're reading the web version of the book, just open a new tab for it!